How to measure agile success?

Discover how agile can enhance your project's success — read on for expert insights and tangible metrics.

Introduction

Adopting an agile approach is bringing success to many organisations. But how can we measure how successful agile is? What does success look like, and how do you get there?

At agileKRC we specialise in helping organisations achieve an agile transformation by helping them adopt agile ways of working or improving their existing agile capability.

What we typically experience is a very positive view of agile and that it is making things better. It is often improving how people are working.

Video

In this video, Keith Richards, the founder of agileKRC, explains what you need to look at when measuring success, what needs measuring and how.

The questions Keith answers in the video include:

- What do you need to look at when measuring success?

- How do you decide what metrics suit your organisation?

- Why are there several forms of success?

- How does the success of what you produce relate to the success of agile?

- How does this relate to organisational success?

- Who needs to help plan this, monitor this and control this?

- What needs to be in place to make it happen?

- How do you know when you have actually got there?


Difficult to quantify success

What we also typically find, however, is that it is hard to work out how much things have improved in a measurable and quantifiable way.

I recently read an article that talked about how agile had saved a large amount of money (hundreds of millions of pounds) on one project alone.

However, the more I read, the less clear I became about what had made the saving. Was it agile or was it the new technology being used? I am sure agile contributed to a better final product, a speedier delivery or both, but how much did it contribute?

This wasn’t easy to see. Using a waterfall approach could also have resulted in huge savings but what was the difference? How much better was agile?

One thing was certain in my view: some of the savings (if not most of them) had nothing to do with agile.

You can’t control what you can’t measure

This is one example of how hard it is to measure agile success. Behind this is a potentially very serious problem. If you cannot tell how successful agile is, should you be doing it? By this I mean (and to quote Tom DeMarco) ‘you can’t control what you can’t measure’.

Separate the benefits

One thing to do immediately (if you have not done so already) is to separate out the benefits derived from a piece of work or project from the benefits of using agile. If you ask your Financial Director or CFO which they prefer, getting agile right or getting the business case right, you will only get one answer.

Quicker, better, cheaper

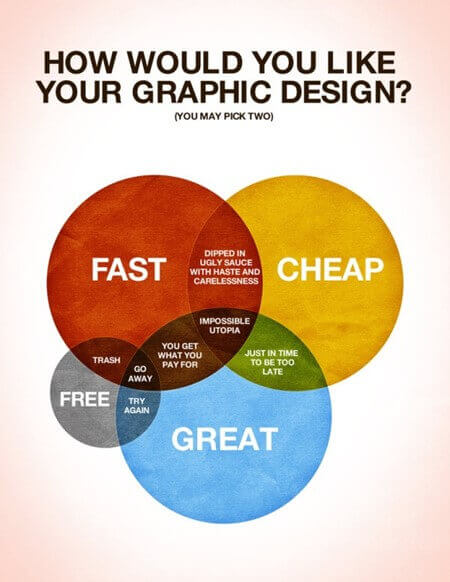

Another thing is to look at the big picture view of your organisation (or maybe it is just your department). What is it trying to achieve? Is it trying to be quicker, better or cheaper? Although this is a little simplistic it is a good starting point.

What is really important to your organisation? And remember, if you are saying ‘all three’ (quicker, better, cheaper) then you will struggle because they impact and compete with each other. As the sign in a blacksmith’s shop said ‘Quick, cheap or well-built – pick two!’.

Unanswered questions

This video is a recording of a webinar. After the webinar there were several questions left unanswered. These have been included below.

Registration question results: Do you have clearly defined success criteria for your agile transition?

| Yes | Somewhat | Not really | No | None of the above |
| --- | --- | --- | --- | --- |
| 8% | 21% | 21% | 36% | 14% |

Webinar poll 1 result: “What are you trying to achieve with agile?” (choose more than one)

| Faster delivery | Delivering what the customer really wants | Building a better product technically | Reducing costs | Something else |
| --- | --- | --- | --- | --- |
| 56% | 83% | 37% | 32% | 5% |

Webinar poll 2 result: “How much has your organisation invested in agile this year?”

| Amount invested | Share of respondents |
| --- | --- |
| Less than EUR 10,000 | 40% |
| Between EUR 10,000 and 25,000 | 16% |
| Between EUR 25,000 and 100,000 | 18% |
| More than EUR 100,000 | 26% |

Before getting into the specific questions I would like to thank Ian Koenig who pointed out that measuring technical debt can be a useful agile measure. Ian highlighted the fact that a lot of agile projects suffer from a lack of upfront planning.

I would add that how much planning to do (at agileKRC we call it Enough Design Up-Front or ‘EDUF’) is always a judgement call. Too much has a negative effect, and so can too little.

We also received an excellent graphic that highlighted the point I was making about competing constraints, when I mentioned a sign in a blacksmith’s shop. Our thanks to Jon Chambers of Legal Aid for this – having worked with many creative agencies I think this is great!

We are trying to implement DevOps and an Agile mode of delivery in my organisation. There is a lot of emphasis on reduced documentation and relying on developers’ knowledge. How will this work if there is a problem in a system, say 2 years after it is delivered? Does the lack of documentation not impact the support aspect of the systems?

It is key to distinguish between the words ‘reduced’ and ‘lack of’. If a script is written in such a way that it is self-documenting, then great. What you need is ‘enough’ documentation as opposed to ‘no’ documentation. Some people refer to this as MVD, or ‘Minimum Viable Documentation’. It is really the same thing as technical debt: do it now or you will end up paying for it later.

I use backlog estimates and velocity to measure project progress, but this isn’t popular with the development team; is this a valid approach?

Yes, it is. Well, I say ‘yes’ in the sense that what you are doing is fine. To be slightly pedantic, I would say this is more about planning than monitoring though. Have a chat with your developers and see if you can find out what their problem is.
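As a rough illustration of the approach described in the question, the arithmetic behind a velocity-based forecast can be sketched as below. This is a minimal sketch, not a prescribed method: the function name `forecast_sprints_remaining` and all of the sprint figures are hypothetical, and it assumes that velocity is simply the average number of story points completed per sprint so far.

```python
import math
from statistics import mean


def forecast_sprints_remaining(completed_points, remaining_backlog_points):
    """Return (average velocity, sprints still needed at that velocity).

    completed_points: story points finished in each past sprint (hypothetical data).
    remaining_backlog_points: total estimate of the work still in the backlog.
    """
    if not completed_points:
        raise ValueError("need at least one completed sprint")
    velocity = mean(completed_points)
    if velocity <= 0:
        raise ValueError("average velocity must be positive")
    # Round up: a partly used sprint is still a sprint on the calendar.
    sprints_needed = math.ceil(remaining_backlog_points / velocity)
    return velocity, sprints_needed


# Hypothetical figures: three sprints done, 90 points still estimated in the backlog.
velocity, sprints_needed = forecast_sprints_remaining([18, 22, 20], 90)
print(f"Average velocity: {velocity} points per sprint")
print(f"Forecast: about {sprints_needed} more sprints")
```

A forecast like this is only as good as the estimates behind it, which is one reason it sits more naturally with planning than with monitoring.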

How do you get teams to change from an Agile framework that is not working (Scrum), to one that does work (DSDM), even though they insist that Scrum is best?

Educate and inform. Find out what problems they are having and see if DSDM can solve them. One classic mistake is to take Scrum outside of its comfort zone. It is great at evolving a product, but it needs a lot more added to it if you are running a project. Get into a discussion. Do not get into a method debate or, worse still, ‘method wars’.

What are the agile success metrics that we need to capture, and can you give some guidelines?

Watch the video – that is what it was all about.

For a distributed team, do we need to have a physical board?

Physical and low-tech is always best in my opinion. If you are not in the same room though you must improvise and recreate this e.g. using a webcam or photos. Ultimately though you may need to go for technology (e.g. electronic Kanban boards).

How do you measure the benefits of collaboration (e.g. introducing new collaborative tools)?

With difficulty, but as I kept stressing during the webinar, you must measure it somehow. A really simple way is ‘marks out of ten’. You need to be really tight on the survey question (e.g. ‘on a scale from one to ten – how do you feel collaboration is working in our team?’). Although this is subjective it still has merit. Quite simply, how else can you tell if the tool is working?
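To make the ‘marks out of ten’ idea concrete, here is a minimal sketch of how the same survey question could be compared before and after a new tool is introduced. The scores, the variable names and the `summarise_scores` helper are all hypothetical, and a simple mean and median are an assumption about what is worth reporting rather than a recommended standard.

```python
from statistics import mean, median


def summarise_scores(scores):
    """Summarise answers to a single one-to-ten survey question."""
    if not scores:
        raise ValueError("no survey responses")
    for score in scores:
        if not 1 <= score <= 10:
            raise ValueError(f"score out of range: {score}")
    return {
        "responses": len(scores),
        "mean": round(mean(scores), 1),
        "median": median(scores),
    }


# Hypothetical answers to the same question, asked before and after the tool arrived.
before = [4, 5, 6, 5, 3, 6]
after = [6, 7, 7, 5, 6, 8]

print("Before:", summarise_scores(before))
print("After: ", summarise_scores(after))
print("Change in mean:", round(mean(after) - mean(before), 1))
```

Asking exactly the same question each time matters more than the statistics chosen, because a subjective score only means something relative to earlier answers.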

How often should we measure success? Maybe there are predictable, milestone-like stages that deserve to be assessed. For example: 1 pre-project, 2 during the project, 3 implementation / handover, 4 post-implementation / acceptability, and 5 longer-term benefits? All of these might require different criteria. Some assess the project, others assess the outcomes.

I suggest you measure agile frequently – I would say little and often. But your idea of doing it at key points is also valid – this gives a bit of formality to it and ensures that it gets done and nothing gets missed. Like I said in the webinar, be careful what you measure. You do not want to measure ‘agile’ and miss out on measuring ‘outcomes’.

Have you seen any good implementations of Agile in hardware centric environments?

Yes. The skill is to get the hardware and the software to line up. It is easier for software than hardware normally, but I think agile helps here a lot with its timeboxing. This helps with things like dependency planning.

How Agile success is achieved in organisations

Agile success depends on Agile principles, Agile methodologies, and Agile project management. Companies like Microsoft, Spotify, and Google have adopted Agile methods to drive Agile achievement and Agile improvement. Agile transformation success relies on feedback, transparency, and collaboration among employees, product owners, and stakeholders. Organisations experience Agile delivery success and Agile management success when Agile frameworks like Scrum, Kanban, and DSDM are implemented at scale.

Key factors for Agile effectiveness and execution success

Agile effectiveness and Agile execution success require continuous integration, iterative development, and regular retrospectives. Agile maturity grows through learning, training, and adapting to changing business demands. Agile practices success is achieved by applying best practices, automation, and cross-functional teams. Agile planning success, Agile strategies, and Agile project success are supported by tools such as Jira, Trello, and Smartsheet, and by techniques such as cumulative flow diagrams.

Driving Agile outcomes and performance improvement

Agile outcomes and Agile performance improve as Agile teams align objectives, reduce waste, and deliver value quickly. Agile software development success stems from consistent communication, stakeholder engagement, and a culture of continuous improvement. Agile transformation is driven by leadership, adaptability, and a commitment to customer success. Success in Agile shows up in successful transformation stories, increased employee satisfaction, and organisational growth.

agileKRC has helped shape agile thinking by leading the teams that developed AgilePM® and PRINCE2® Agile. We take a practical, success-oriented approach. We begin by taking the time to listen and understand your needs, before offering our real-world experience and expert guidance.